Llama 2

This model was released on 2023-07-18 and added to Hugging Face Transformers on 2023-07-18.

Llama 2 is a family of large language models, Llama 2 and Llama 2-Chat, available in 7B, 13B, and 70B parameter sizes. Llama 2 mostly keeps the same architecture as Llama, but it is pretrained on more tokens, doubles the context length, and uses grouped-query attention (GQA) in the 70B model to make inference more efficient.
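To make the benefit of GQA concrete, here is a rough back-of-the-envelope sketch of the key-value cache size with and without it. The dimensions are assumptions based on the published 70B configuration (80 layers, 64 query heads, 8 key-value heads, head dimension 128, fp16 values), and `kv_cache_bytes` is a hypothetical helper for illustration, not part of Transformers.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Estimate the KV cache size for one sequence (keys + values, all layers)."""
    # The factor 2 accounts for storing both a key and a value vector per head.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Assumed 70B-style dimensions (not read from a checkpoint).
NUM_LAYERS = 80
NUM_QUERY_HEADS = 64
NUM_KV_HEADS_GQA = 8
HEAD_DIM = 128
CONTEXT_LENGTH = 4096  # Llama 2 doubles Llama's 2048-token context

# With standard multi-head attention, every query head has its own key/value head.
mha_bytes = kv_cache_bytes(NUM_LAYERS, NUM_QUERY_HEADS, HEAD_DIM, CONTEXT_LENGTH)
# With GQA, groups of query heads share a smaller set of key/value heads.
gqa_bytes = kv_cache_bytes(NUM_LAYERS, NUM_KV_HEADS_GQA, HEAD_DIM, CONTEXT_LENGTH)

GIB = 1024 ** 3
print(f"multi-head attention KV cache:    {mha_bytes / GIB:.2f} GiB")
print(f"grouped-query attention KV cache: {gqa_bytes / GIB:.2f} GiB")
print(f"reduction factor: {mha_bytes // gqa_bytes}x")
```

Under these assumptions the cache shrinks in proportion to the ratio of query heads to key-value heads, which is where most of the inference saving comes from.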

Llama 2-Chat is first trained with supervised fine-tuning (SFT). Reinforcement learning from human feedback (RLHF), using rejection sampling and proximal policy optimization (PPO), is then applied to the fine-tuned model to align the chat model with human preferences.
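The rejection sampling step can be pictured as "sample several responses, keep the one the reward model prefers". The sketch below is a toy illustration only: `toy_generate` and `toy_reward` are hypothetical stand-ins for the chat model and the reward model, and the candidate strings are made up.

```python
import random


def toy_generate(prompt, rng):
    """Hypothetical stand-in for sampling one response from the chat model."""
    endings = [
        "photosynthesis.",
        "magic.",
        "photosynthesis, using sunlight.",
        "unknown means.",
    ]
    return f"{prompt} {rng.choice(endings)}"


def toy_reward(response):
    """Hypothetical stand-in for a reward model: higher means more preferred."""
    score = 0.0
    if "photosynthesis" in response:
        score += 1.0
    if "sunlight" in response:
        score += 0.5
    return score


def rejection_sample(prompt, num_candidates, rng):
    """Sample several candidates and keep the one with the highest reward."""
    candidates = [toy_generate(prompt, rng) for _ in range(num_candidates)]
    best = max(candidates, key=toy_reward)
    return best, candidates


rng = random.Random(0)
best, candidates = rejection_sample("Plants create energy through", 8, rng)
print("best candidate:", best)
```

In an RLHF pipeline the selected responses would then serve as fine-tuning targets. PPO is a separate optimization step that this sketch does not cover.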

You can find all the original Llama 2 checkpoints under the Llama 2 Family collection.

The examples below demonstrate how to generate text with Pipeline or AutoModel, and how to chat with Llama 2-Chat from the command line.

```python
import torch
from transformers import pipeline

pipeline = pipeline(
    task="text-generation",
    model="meta-llama/Llama-2-7b-hf",
    dtype=torch.float16,
    device=0
)
pipeline("Plants create energy through a process known as")
```

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained(
    "meta-llama/Llama-2-7b-hf",
)
model = AutoModelForCausalLM.from_pretrained(
    "meta-llama/Llama-2-7b-hf",
    dtype=torch.float16,
    device_map="auto",
    attn_implementation="sdpa"
)
input_ids = tokenizer("Plants create energy through a process known as", return_tensors="pt").to(model.device)

output = model.generate(**input_ids, cache_implementation="static")
print(tokenizer.decode(output[0], skip_special_tokens=True))
```

```bash
transformers chat meta-llama/Llama-2-7b-chat-hf --dtype auto --attn_implementation flash_attention_2
```

Quantization reduces the memory burden of large models by representing the weights in a lower precision. Refer to the Quantization overview for more available quantization backends.
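As a rough illustration of what "lower precision" means for weights, the sketch below quantizes a few floats to 4-bit codes in small groups and maps them back. It assumes simple asymmetric min/max quantization per group. It is not how any particular backend implements int4 quantization, and all function names are illustrative.

```python
def quantize_group(values, num_bits=4):
    """Asymmetric quantization of one group of floats to unsigned integer codes."""
    levels = (1 << num_bits) - 1  # 15 distinct steps for 4 bits
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels if hi > lo else 1.0
    # Round to the nearest code and clamp into the valid range.
    codes = [min(levels, max(0, round((v - lo) / scale))) for v in values]
    return codes, scale, lo


def dequantize_group(codes, scale, lo):
    """Map integer codes back to approximate floats."""
    return [c * scale + lo for c in codes]


def quantize_weights(weights, group_size):
    """Quantize a flat list of weights group by group, one scale per group."""
    groups = []
    for start in range(0, len(weights), group_size):
        groups.append(quantize_group(weights[start:start + group_size]))
    return groups


weights = [0.12, -0.40, 0.33, 0.05, -0.27, 0.48, -0.02, 0.19]
groups = quantize_weights(weights, group_size=4)

restored = []
for codes, scale, lo in groups:
    restored.extend(dequantize_group(codes, scale, lo))

max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("max round-trip error:", max_error)
```

Each weight is stored as a 4-bit code plus a shared scale and offset per group, so a smaller group size trades extra metadata for a lower round-trip error.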

The example below uses torchao to only quantize the weights to int4.

# pip install torchaoimport torchfrom transformers import TorchAoConfig, AutoModelForCausalLM, AutoTokenizer

quantization_config = TorchAoConfig("int4_weight_only", group_size=128)model = AutoModelForCausalLM.from_pretrained( "meta-llama/Llama-2-13b-hf", dtype=torch.bfloat16, device_map="auto", quantization_config=quantization_config)

tokenizer = AutoTokenizer.from_pretrained("meta-llama/Llama-2-13b-hf")input_ids = tokenizer("Plants create energy through a process known as", return_tensors="pt").to(model.device)

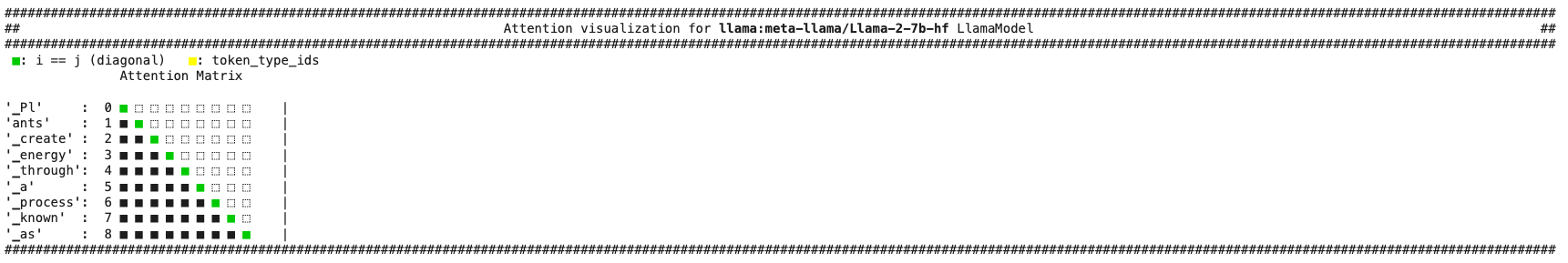

output = model.generate(**input_ids, cache_implementation="static")print(tokenizer.decode(output[0], skip_special_tokens=True))Use the AttentionMaskVisualizer to better understand what tokens the model can and cannot attend to.

from transformers.utils.attention_visualizer import AttentionMaskVisualizer

visualizer = AttentionMaskVisualizer("meta-llama/Llama-2-7b-hf")visualizer("Plants create energy through a process known as")
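For intuition about what such a visualization shows, the following minimal sketch builds a plain causal mask by hand, assuming a decoder-only model with no padding. The whitespace split is only a placeholder for the real tokenizer, and `causal_mask` and `render` are illustrative helpers, not Transformers APIs.

```python
def causal_mask(num_tokens):
    """Return a matrix where mask[i][j] is True if token i may attend to token j."""
    return [[j <= i for j in range(num_tokens)] for i in range(num_tokens)]


def render(mask, tokens):
    """Build one row per query token: 'x' for visible keys, '.' for masked ones."""
    lines = []
    for token, row in zip(tokens, mask):
        cells = " ".join("x" if allowed else "." for allowed in row)
        lines.append(f"{token:>8} | {cells}")
    return "\n".join(lines)


# Whitespace splitting is a placeholder; real tokens come from the tokenizer.
tokens = "Plants create energy through".split()
mask = causal_mask(len(tokens))
print(render(mask, tokens))
```

Each token can attend to itself and to everything before it, but never to later tokens, which gives the lower-triangular pattern.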

- Setting `config.pretraining_tp` to a value besides `1` activates a more accurate but slower computation of the linear layers. This matches the original logits better.

- The original model uses `pad_id = -1` to indicate a padding token. The Transformers implementation requires adding a padding token and resizing the token embedding accordingly.

  ```python
  tokenizer.add_special_tokens({"pad_token": "<pad>"})
  # resize the token embeddings to account for the new padding token
  model.resize_token_embeddings(len(tokenizer))
  # update model config with padding token
  model.config.pad_token_id = tokenizer.pad_token_id
  ```

- It is recommended to initialize the `embed_tokens` layer with the following code to ensure that encoding the padding token outputs zeros.

  ```python
  self.embed_tokens = nn.Embedding(config.vocab_size, config.hidden_size, self.config.padding_idx)
  ```

- The tokenizer is a byte-pair encoding model based on SentencePiece. During decoding, if the first token is the start of the word (for example, “Banana”), the tokenizer doesn’t prepend the prefix space to the string.

- FlashAttention-2 only supports fp16 or bf16, so don’t use the `dtype` parameter in `from_pretrained` if you’re using it. Instead, use Automatic Mixed Precision: set `fp16` or `bf16` to `True` if using `Trainer`, or use `torch.autocast`.
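The decoding note above can be illustrated with a toy model of SentencePiece-style pieces, where a marker character stands for the space before a word. This is a simplified sketch, not the actual tokenizer implementation. The pieces are made up for illustration and `toy_decode` is a hypothetical helper.

```python
SPACE_MARKER = "\u2581"  # the symbol SentencePiece uses to mark a preceding space


def toy_decode(pieces):
    """Join subword pieces and turn space markers back into spaces."""
    text = "".join(pieces).replace(SPACE_MARKER, " ")
    # Drop the single prefix space that a word-initial first piece would produce.
    if pieces and pieces[0].startswith(SPACE_MARKER):
        text = text[1:]
    return text


# First piece starts a word: no leading space in the decoded string.
print(repr(toy_decode([SPACE_MARKER + "Ban", "ana"])))
# Spaces between later words are kept.
print(repr(toy_decode([SPACE_MARKER + "I", SPACE_MARKER + "like", SPACE_MARKER + "ban", "anas"])))
# A first piece that continues a word is returned unchanged.
print(repr(toy_decode(["ana"])))
```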

LlamaConfig

[[autodoc]] LlamaConfig

LlamaTokenizer

[[autodoc]] LlamaTokenizer
    - get_special_tokens_mask
    - save_vocabulary

LlamaTokenizerFast

[[autodoc]] LlamaTokenizerFast
    - get_special_tokens_mask
    - update_post_processor
    - save_vocabulary

LlamaModel

[[autodoc]] LlamaModel
    - forward

LlamaForCausalLM

[[autodoc]] LlamaForCausalLM
    - forward

LlamaForSequenceClassification

[[autodoc]] LlamaForSequenceClassification
    - forward