Llama

This model was released on 2023-02-27 and added to Hugging Face Transformers on 2023-03-16.

Llama is a family of large language models ranging from 7B to 65B parameters. The models prioritize efficient inference, which matters when serving language models, by training a smaller model on more tokens instead of training a larger model on fewer tokens. The Llama model is based on the GPT architecture, but it uses pre-normalization to improve training stability, replaces ReLU with SwiGLU to improve performance, and replaces absolute positional embeddings with rotary positional embeddings (RoPE) to better handle longer sequence lengths.
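As a rough illustration of the three architectural changes named above, the following pure-Python sketch implements a pre-normalized SwiGLU feed-forward step and a rotary embedding on plain lists. It assumes RMSNorm as the normalization (as described in the LLaMA paper), uses toy weights invented for this example, and rotates interleaved pairs for RoPE. The Transformers implementation works on batched tensors and may organize the rotated dimensions differently, so treat this as a sketch and not as the library's code.

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Divide x by its root mean square, then scale by a learned weight."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [w * v / rms for v, w in zip(x, weight)]


def silu(v):
    """SiLU (swish) activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def matvec(matrix, x):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, x)) for row in matrix]


def swiglu_mlp(x, w_gate, w_up, w_down):
    """SwiGLU feed-forward: down(silu(gate(x)) * up(x))."""
    gate = [silu(g) for g in matvec(w_gate, x)]
    up = matvec(w_up, x)
    return matvec(w_down, [g * u for g, u in zip(gate, up)])


def rope(x, position, base=10000.0):
    """Rotate each pair (x[i], x[i + 1]) by an angle proportional to position."""
    out = list(x)
    dim = len(x)
    for i in range(0, dim, 2):
        theta = position * base ** (-i / dim)
        c, s = math.cos(theta), math.sin(theta)
        out[i] = x[i] * c - x[i + 1] * s
        out[i + 1] = x[i] * s + x[i + 1] * c
    return out


# Hypothetical toy sizes and weights, chosen only so the example runs.
hidden = [1.0, -2.0, 0.5, 3.0]
norm_weight = [1.0, 1.0, 1.0, 1.0]
w_gate = [[0.1, 0.2, 0.3, 0.4], [0.0, -0.1, 0.2, 0.1]]        # 2 x 4
w_up = [[0.3, 0.1, -0.2, 0.0], [0.2, 0.2, 0.1, -0.1]]         # 2 x 4
w_down = [[0.5, -0.5], [0.1, 0.2], [0.0, 0.3], [-0.2, 0.4]]   # 4 x 2

# Pre-normalization: normalize the sub-layer input, then add the residual.
mlp_out = swiglu_mlp(rms_norm(hidden, norm_weight), w_gate, w_up, w_down)
hidden = [h + m for h, m in zip(hidden, mlp_out)]

# RoPE encodes position by rotation instead of adding a learned embedding.
query = rope([1.0, 0.0, 1.0, 0.0], position=3)
print(hidden)
print(query)
```

Because RoPE rotates queries and keys by position-dependent angles, the dot product between a rotated query and a rotated key depends only on the distance between their positions, which is the property that makes it a relative positional scheme.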

You can find all the original Llama checkpoints under the Huggy Llama organization.

The examples below demonstrate how to generate text with Pipeline, with AutoModel, and from the command line.

    # Generate text with Pipeline
    import torch
    from transformers import pipeline

    # Use a distinct name so the imported pipeline() function is not shadowed.
    pipe = pipeline(
        task="text-generation",
        model="huggyllama/llama-7b",
        dtype=torch.float16,
        device=0
    )
    pipe("Plants create energy through a process known as")

    # Generate text with AutoModel
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(
        "huggyllama/llama-7b",
    )
    model = AutoModelForCausalLM.from_pretrained(
        "huggyllama/llama-7b",
        dtype=torch.float16,
        device_map="auto",
        attn_implementation="sdpa"
    )
    input_ids = tokenizer("Plants create energy through a process known as", return_tensors="pt").to(model.device)

    output = model.generate(**input_ids, cache_implementation="static")
    print(tokenizer.decode(output[0], skip_special_tokens=True))

    # Generate text from the command line
    echo -e "Plants create energy through a process known as" | transformers run --task text-generation --model huggyllama/llama-7b --device 0

Quantization reduces the memory burden of large models by representing the weights in a lower precision. Refer to the Quantization overview for more available quantization backends.
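To make the memory argument concrete, here is a back-of-the-envelope sketch that estimates the storage needed for the weights alone at different precisions. The helper `weight_memory_gib` is hypothetical, the parameter counts are the nominal sizes from the model names and not exact counts, and the estimate ignores activations, the KV cache, and the per-group scales that a group-wise int4 scheme stores alongside the weights.

```python
def weight_memory_gib(num_params, bits_per_weight):
    """Approximate memory for the weights alone, in GiB (hypothetical helper)."""
    return num_params * bits_per_weight / 8 / 1024**3


# Nominal parameter counts taken from the checkpoint names (assumption).
nominal_sizes = {"7B": 7e9, "30B": 30e9, "65B": 65e9}

for name, params in nominal_sizes.items():
    bf16 = weight_memory_gib(params, 16)  # bfloat16 / float16: 16 bits per weight
    int4 = weight_memory_gib(params, 4)   # int4: 4 bits per weight
    print(f"{name}: about {bf16:.1f} GiB in 16-bit, about {int4:.1f} GiB in int4")
```

Under these assumptions, int4 weights take a quarter of the space of 16-bit weights, which is often the difference between a checkpoint fitting on a given device or not.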

The example below uses torchao to quantize only the weights to int4.

# pip install torchaoimport torchfrom transformers import TorchAoConfig, AutoModelForCausalLM, AutoTokenizer

quantization_config = TorchAoConfig("int4_weight_only", group_size=128)model = AutoModelForCausalLM.from_pretrained( "huggyllama/llama-30b", dtype=torch.bfloat16, device_map="auto", quantization_config=quantization_config)

tokenizer = AutoTokenizer.from_pretrained("huggyllama/llama-30b")input_ids = tokenizer("Plants create energy through a process known as", return_tensors="pt").to(model.device)

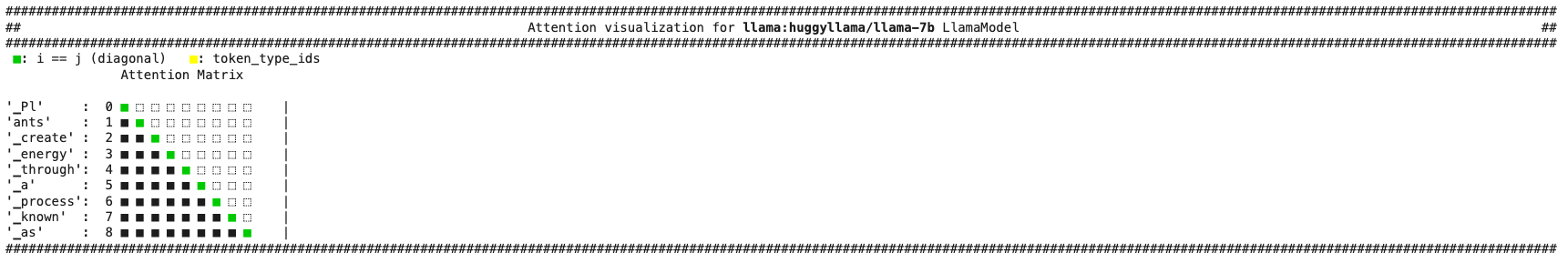

output = model.generate(**input_ids, cache_implementation="static")print(tokenizer.decode(output[0], skip_special_tokens=True))Use the AttentionMaskVisualizer to better understand what tokens the model can and cannot attend to.

    from transformers.utils.attention_visualizer import AttentionMaskVisualizer

    visualizer = AttentionMaskVisualizer("huggyllama/llama-7b")
    visualizer("Plants create energy through a process known as")
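For intuition about what such a mask contains, the sketch below builds a causal mask by hand for a causal language model like Llama: each position may attend to itself and to earlier positions only. The function `causal_mask` is a hypothetical helper, and the whitespace-split words stand in for real tokens; the actual tokenizer produces different pieces and adds a beginning-of-sequence token.

```python
def causal_mask(num_tokens):
    """Row i is the query position; entry j is 1 if position i may attend to j."""
    return [[1 if j <= i else 0 for j in range(num_tokens)] for i in range(num_tokens)]


# Whitespace-split words used as stand-ins for real tokens (assumption).
tokens = "Plants create energy through".split()

for token, row in zip(tokens, causal_mask(len(tokens))):
    cells = " ".join("x" if allowed else "." for allowed in row)
    print(f"{token:>8}  {cells}")
```

The lower-triangular pattern is why generation can proceed one token at a time: no position ever depends on tokens that come after it.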

- The tokenizer is a byte-pair encoding model based on SentencePiece. During decoding, if the first token is the start of the word (for example, “Banana”), the tokenizer doesn’t prepend the prefix space to the string.
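The decoding note above can be illustrated with a toy decoder. This is not the code used by LlamaTokenizer; `toy_decode` and `SPACE_MARKER` are hypothetical names, and the sketch only assumes the SentencePiece convention of marking a word start with the "▁" character.

```python
SPACE_MARKER = "\u2581"  # "▁", the SentencePiece marker for a word boundary


def toy_decode(pieces):
    """Join pieces, turn markers into spaces, and drop the leading prefix space."""
    text = "".join(pieces).replace(SPACE_MARKER, " ")
    if pieces and pieces[0].startswith(SPACE_MARKER):
        text = text[1:]  # the first piece starts a word: no prefix space is kept
    return text


print(repr(toy_decode([SPACE_MARKER + "Banana"])))
print(repr(toy_decode([SPACE_MARKER + "Hello", SPACE_MARKER + "world"])))
print(repr(toy_decode(["ana"])))
```

In this toy model, a first piece that starts a word decodes without a leading space, while spaces between later words are preserved.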

LlamaConfig

[[autodoc]] LlamaConfig

LlamaTokenizer

[[autodoc]] LlamaTokenizer
    - get_special_tokens_mask
    - save_vocabulary

LlamaTokenizerFast

[[autodoc]] LlamaTokenizerFast
    - get_special_tokens_mask
    - update_post_processor
    - save_vocabulary

LlamaModel

[[autodoc]] LlamaModel
    - forward

LlamaForCausalLM

[[autodoc]] LlamaForCausalLM
    - forward

LlamaForSequenceClassification

[[autodoc]] LlamaForSequenceClassification
    - forward

LlamaForQuestionAnswering

[[autodoc]] LlamaForQuestionAnswering
    - forward

LlamaForTokenClassification

[[autodoc]] LlamaForTokenClassification
    - forward