EfficientLoFTR

This model was released on 2024-03-07 and added to Hugging Face Transformers on 2025-07-22.


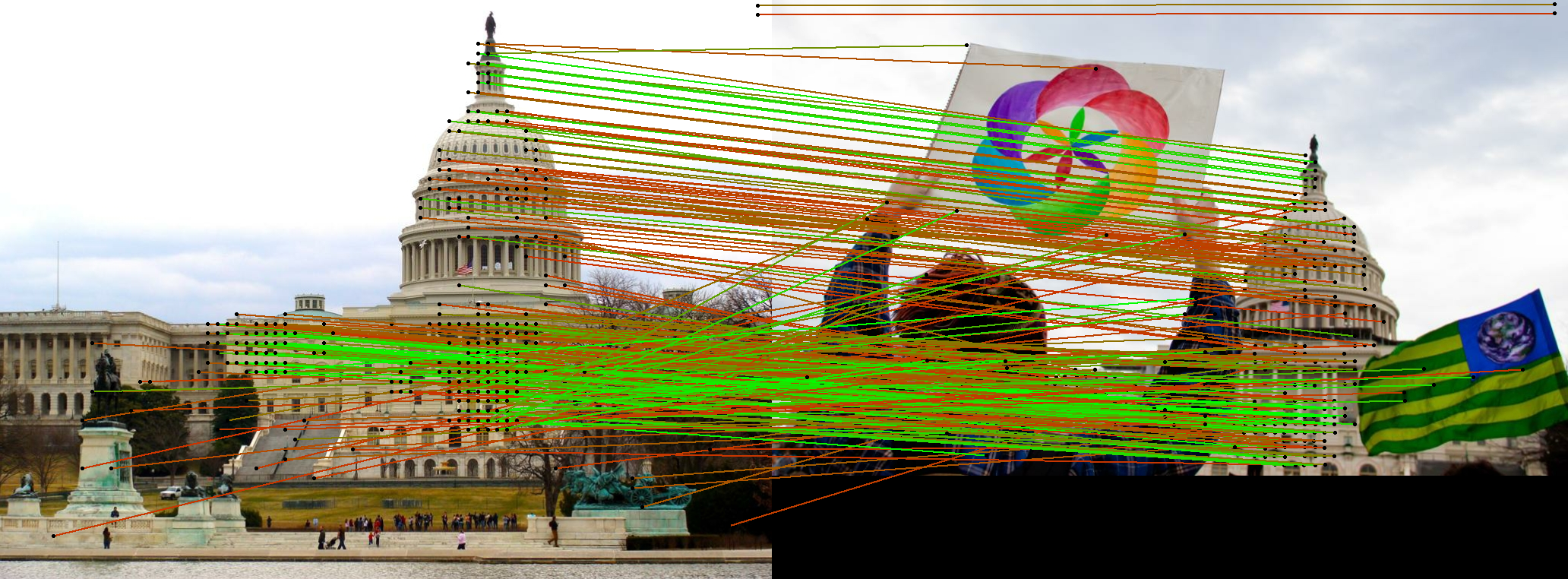

EfficientLoFTR is an efficient detector-free local feature matching method that produces semi-dense matches across images at speeds comparable to sparse matchers. It builds on the original LoFTR architecture and improves both efficiency and accuracy. The key innovation is an aggregated attention mechanism with adaptive token selection that makes the model ~2.5× faster than LoFTR while achieving higher accuracy. EfficientLoFTR can even surpass efficient state-of-the-art sparse matching pipelines such as SuperPoint + LightGlue in speed, making it suitable for large-scale or latency-sensitive applications such as image retrieval and 3D reconstruction.

Click on the EfficientLoFTR models in the right sidebar for more examples of how to apply EfficientLoFTR to different computer vision tasks.

The example below demonstrates how to match keypoints between two images with Pipeline or the AutoModel class.

Pipeline

    from transformers import pipeline

    keypoint_matcher = pipeline(task="keypoint-matching", model="zju-community/efficientloftr")

    url_0 = "https://raw.githubusercontent.com/magicleap/SuperGluePretrainedNetwork/refs/heads/master/assets/phototourism_sample_images/united_states_capitol_98169888_3347710852.jpg"
    url_1 = "https://raw.githubusercontent.com/magicleap/SuperGluePretrainedNetwork/refs/heads/master/assets/phototourism_sample_images/united_states_capitol_26757027_6717084061.jpg"

    results = keypoint_matcher([url_0, url_1], threshold=0.9)
    print(results[0])
    # {'keypoint_image_0': {'x': ..., 'y': ...}, 'keypoint_image_1': {'x': ..., 'y': ...}, 'score': ...}

AutoModel

    from transformers import AutoImageProcessor, AutoModelForKeypointMatching
    import torch
    from PIL import Image
    import requests

    url_image1 = "https://raw.githubusercontent.com/magicleap/SuperGluePretrainedNetwork/refs/heads/master/assets/phototourism_sample_images/united_states_capitol_98169888_3347710852.jpg"
    image1 = Image.open(requests.get(url_image1, stream=True).raw)
    url_image2 = "https://raw.githubusercontent.com/magicleap/SuperGluePretrainedNetwork/refs/heads/master/assets/phototourism_sample_images/united_states_capitol_26757027_6717084061.jpg"
    image2 = Image.open(requests.get(url_image2, stream=True).raw)

    images = [image1, image2]

    processor = AutoImageProcessor.from_pretrained("zju-community/efficientloftr")
    model = AutoModelForKeypointMatching.from_pretrained("zju-community/efficientloftr")

    inputs = processor(images, return_tensors="pt")
    with torch.inference_mode():
        outputs = model(**inputs)

    # Post-process to get keypoints and matches
    image_sizes = [[(image.height, image.width) for image in images]]
    processed_outputs = processor.post_process_keypoint_matching(outputs, image_sizes, threshold=0.2)
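The pipeline example above prints a single match as a dictionary with keypoint_image_0, keypoint_image_1, and score entries. As a minimal sketch, assuming the pipeline result for one image pair is a flat list of such dictionaries, the matches can be unpacked into coordinate lists. The helper name `split_matches` is hypothetical (it is not part of Transformers) and the sample values are invented for illustration.

```python
def split_matches(results, min_score=0.0):
    """Unpack pipeline-style match dicts into two coordinate lists and a score list."""
    points0, points1, scores = [], [], []
    for match in results:
        if match["score"] < min_score:
            continue  # drop low-confidence matches
        points0.append((match["keypoint_image_0"]["x"], match["keypoint_image_0"]["y"]))
        points1.append((match["keypoint_image_1"]["x"], match["keypoint_image_1"]["y"]))
        scores.append(match["score"])
    return points0, points1, scores


# Invented sample data in the format shown by the pipeline example.
sample_results = [
    {"keypoint_image_0": {"x": 10, "y": 20}, "keypoint_image_1": {"x": 12, "y": 21}, "score": 0.95},
    {"keypoint_image_0": {"x": 30, "y": 40}, "keypoint_image_1": {"x": 33, "y": 44}, "score": 0.91},
]

points0, points1, scores = split_matches(sample_results, min_score=0.93)
print(points0, points1, scores)
```

Keeping the coordinates of both images in parallel lists makes it straightforward to hand them to a plotting or geometry routine later.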

EfficientLoFTR is designed for efficiency while maintaining high accuracy. It uses an aggregated attention mechanism with adaptive token selection to reduce computational overhead compared to the original LoFTR.

    from transformers import AutoImageProcessor, AutoModelForKeypointMatching
    import torch
    from PIL import Image
    import requests

    processor = AutoImageProcessor.from_pretrained("zju-community/efficientloftr")
    model = AutoModelForKeypointMatching.from_pretrained("zju-community/efficientloftr")

    # EfficientLoFTR requires pairs of images
    # (image1 and image2 are the PIL images loaded in the AutoModel example)
    images = [image1, image2]
    inputs = processor(images, return_tensors="pt")
    with torch.inference_mode():
        outputs = model(**inputs)

    # Extract matching information
    keypoints = outputs.keypoints  # Keypoints in both images
    matches = outputs.matches  # Matching indices
    matching_scores = outputs.matching_scores  # Confidence scores
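The efficiency gain described above comes from running attention over fewer tokens. The following is a simplified NumPy illustration of that idea only, not the model's actual implementation: it average-pools a feature map by a hypothetical factor `pool`, runs plain softmax attention over the pooled tokens, and copies the result back to full resolution. The pooling operator, function names, and sizes are all assumptions chosen for readability.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def pool_tokens(feature_map, pool):
    """Average-pool an (H, W, C) map by `pool` and flatten it to (H//pool * W//pool, C)."""
    h, w, c = feature_map.shape
    blocks = feature_map.reshape(h // pool, pool, w // pool, pool, c)
    return blocks.mean(axis=(1, 3)).reshape(-1, c)


def aggregated_attention(feature_map, pool):
    """Attend over pooled tokens, then broadcast the result back to (H, W, C)."""
    h, w, c = feature_map.shape
    tokens = pool_tokens(feature_map, pool)            # (H*W / pool^2, C)
    weights = softmax(tokens @ tokens.T / np.sqrt(c))  # reduced attention matrix
    attended = weights @ tokens
    coarse = attended.reshape(h // pool, w // pool, c)
    # Nearest-neighbour upsampling back to the input resolution.
    full = coarse.repeat(pool, axis=0).repeat(pool, axis=1)
    return full, weights.shape


rng = np.random.default_rng(0)
features = rng.standard_normal((8, 8, 16))
output, attention_shape = aggregated_attention(features, pool=2)
print(output.shape, attention_shape)  # (8, 8, 16) (16, 16)
```

In this toy setting, full attention over the 8 × 8 map would need a 64 × 64 attention matrix, while the pooled version needs only 16 × 16.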

The model produces semi-dense matches, offering a good balance between match density and computational efficiency. It excels at handling large viewpoint changes and texture-poor scenarios.


For better visualization and analysis, use the post_process_keypoint_matching method to get matches in a more readable format.

    # Process outputs for visualization
    image_sizes = [[(image.height, image.width) for image in images]]
    processed_outputs = processor.post_process_keypoint_matching(outputs, image_sizes, threshold=0.2)

    for i, output in enumerate(processed_outputs):
        print(f"For the image pair {i}")
        for keypoint0, keypoint1, matching_score in zip(
            output["keypoints0"], output["keypoints1"], output["matching_scores"]
        ):
            print(f"Keypoint at {keypoint0.numpy()} matches with keypoint at {keypoint1.numpy()} with score {matching_score}")
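Matched keypoints are typically passed on to a geometric estimation step. As a rough sketch of such downstream use, the NumPy code below keeps matches above a score threshold and fits a 2D affine transform by least squares. The arrays are synthetic stand-ins for output["keypoints0"], output["keypoints1"], and output["matching_scores"] after conversion with .numpy(), and `fit_affine` is a hypothetical helper. Real image pairs contain outliers, so a robust estimator such as RANSAC would usually be preferable.

```python
import numpy as np


def fit_affine(points0, points1):
    """Least-squares fit of A (2x2) and t (2,) so that points1 is close to points0 @ A.T + t."""
    ones = np.ones((points0.shape[0], 1))
    design = np.hstack([points0, ones])                        # (N, 3)
    params, *_ = np.linalg.lstsq(design, points1, rcond=None)  # (3, 2)
    return params[:2].T, params[2]


# Synthetic stand-ins for the post-processed outputs of one image pair.
rng = np.random.default_rng(0)
keypoints0 = rng.uniform(0, 100, size=(50, 2))
true_A = np.array([[0.9, -0.1], [0.1, 0.9]])
true_t = np.array([5.0, -3.0])
keypoints1 = keypoints0 @ true_A.T + true_t
matching_scores = rng.uniform(0, 1, size=50)

keep = matching_scores > 0.2  # same threshold value as in the example above
A, t = fit_affine(keypoints0[keep], keypoints1[keep])
print(np.round(A, 3), np.round(t, 3))
```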

Visualize the matches between the images using the built-in plotting functionality.

    # Easy visualization using the built-in plotting method
    visualized_images = processor.visualize_keypoint_matching(images, processed_outputs)

EfficientLoFTR uses a novel two-stage correlation layer that achieves accurate subpixel correspondences, improving upon the original LoFTR’s fine correlation module.
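Subpixel accuracy is commonly obtained by refining an integer-pixel match with an expectation over a small local correlation window. The sketch below illustrates that general technique in NumPy. It is not the model's correlation layer, and the 3 × 3 window, the temperature value, and the function name are assumptions made for the example.

```python
import numpy as np


def refine_subpixel(correlation, temperature=0.1):
    """Two-step localisation on a 2D correlation map.

    Step 1 picks the integer peak. Step 2 takes a softmax-weighted average
    of the offsets in the 3x3 window around it to get a subpixel position.
    """
    h, w = correlation.shape
    row, col = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Clamp so the 3x3 window stays inside the map.
    row = int(np.clip(row, 1, h - 2))
    col = int(np.clip(col, 1, w - 2))
    window = correlation[row - 1:row + 2, col - 1:col + 2]
    weights = np.exp((window - window.max()) / temperature)
    weights /= weights.sum()
    offsets = np.array([-1.0, 0.0, 1.0])
    d_row = (weights.sum(axis=1) * offsets).sum()
    d_col = (weights.sum(axis=0) * offsets).sum()
    return row + d_row, col + d_col


# Synthetic correlation map with a smooth peak at (3.3, 4.6).
rows, cols = np.mgrid[0:8, 0:8]
correlation = np.exp(-((rows - 3.3) ** 2 + (cols - 4.6) ** 2) / 2.0)
refined_row, refined_col = refine_subpixel(correlation)
print(refined_row, refined_col)
```

On this synthetic map the integer peak is at (3, 5), and the refined estimate moves toward the true peak location in both coordinates.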

Resources

- Refer to the original EfficientLoFTR repository for more examples and implementation details.

- EfficientLoFTR project page with interactive demos and additional information.

EfficientLoFTRConfig

[[autodoc]] EfficientLoFTRConfig

EfficientLoFTRImageProcessor

[[autodoc]] EfficientLoFTRImageProcessor
    - preprocess
    - post_process_keypoint_matching
    - visualize_keypoint_matching

EfficientLoFTRImageProcessorFast

[[autodoc]] EfficientLoFTRImageProcessorFast
    - preprocess
    - post_process_keypoint_matching
    - visualize_keypoint_matching

EfficientLoFTRModel

[[autodoc]] EfficientLoFTRModel
    - forward

EfficientLoFTRForKeypointMatching

[[autodoc]] EfficientLoFTRForKeypointMatching
    - forward