OneFormer

This model was released on 2022-11-10 and added to Hugging Face Transformers on 2023-01-19.

Overview

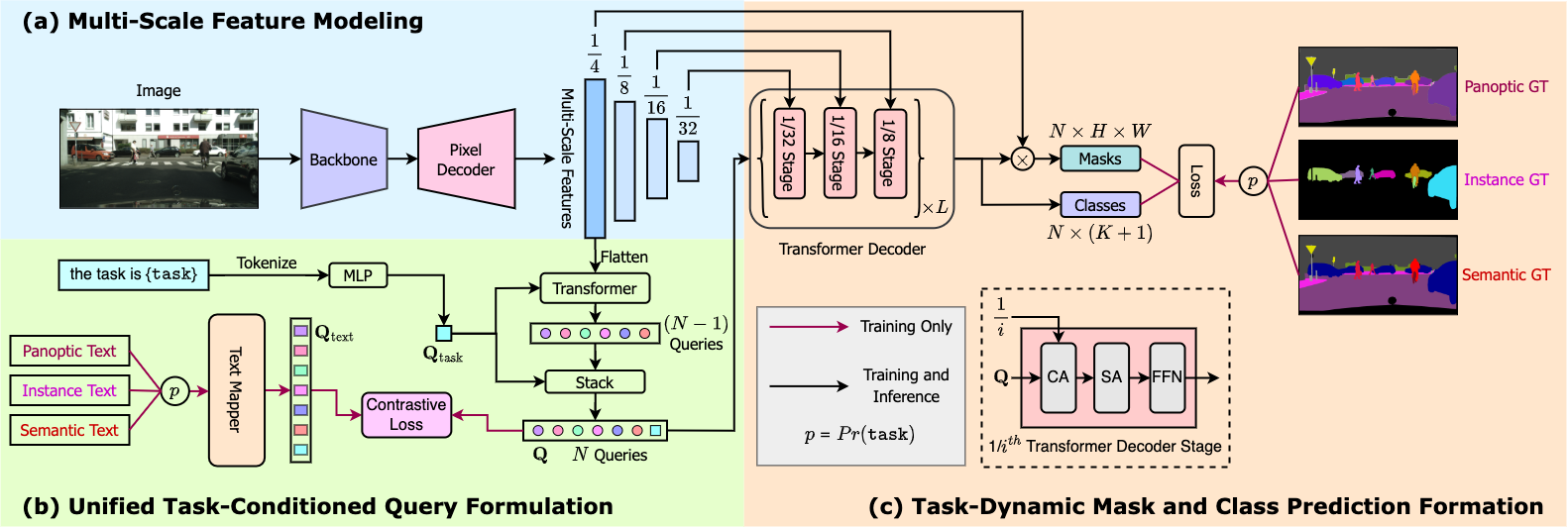

The OneFormer model was proposed in "OneFormer: One Transformer to Rule Universal Image Segmentation" by Jitesh Jain, Jiachen Li, MangTik Chiu, Ali Hassani, Nikita Orlov, and Humphrey Shi. OneFormer is a universal image segmentation framework that can be trained on a single panoptic dataset to perform semantic, instance, and panoptic segmentation. It uses a task token to condition the model on the task in focus, which makes the architecture task-guided during training and task-dynamic during inference.

The abstract from the paper is the following:

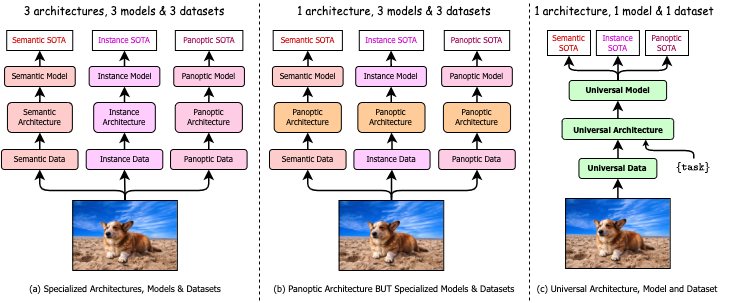

Universal Image Segmentation is not a new concept. Past attempts to unify image segmentation in the last decades include scene parsing, panoptic segmentation, and, more recently, new panoptic architectures. However, such panoptic architectures do not truly unify image segmentation because they need to be trained individually on the semantic, instance, or panoptic segmentation to achieve the best performance. Ideally, a truly universal framework should be trained only once and achieve SOTA performance across all three image segmentation tasks. To that end, we propose OneFormer, a universal image segmentation framework that unifies segmentation with a multi-task train-once design. We first propose a task-conditioned joint training strategy that enables training on ground truths of each domain (semantic, instance, and panoptic segmentation) within a single multi-task training process. Secondly, we introduce a task token to condition our model on the task at hand, making our model task-dynamic to support multi-task training and inference. Thirdly, we propose using a query-text contrastive loss during training to establish better inter-task and inter-class distinctions. Notably, our single OneFormer model outperforms specialized Mask2Former models across all three segmentation tasks on ADE20k, CityScapes, and COCO, despite the latter being trained on each of the three tasks individually with three times the resources. With new ConvNeXt and DiNAT backbones, we observe even more performance improvement. We believe OneFormer is a significant step towards making image segmentation more universal and accessible.

The figure below illustrates the architecture of OneFormer. Taken from the original paper.

This model was contributed by Jitesh Jain. The original code can be found here.

Usage tips

- OneFormer requires two inputs during inference: an image and a task token.

- During training, OneFormer uses only panoptic annotations.

- To train the model in a distributed environment across multiple nodes, update the `get_num_masks` function inside the `OneFormerLoss` class of `modeling_oneformer.py`. When training on multiple nodes, this should be set to the average number of target masks across all nodes, as can be seen in the original implementation here.

- Use `OneFormerProcessor` to prepare the input images, the task inputs, and the optional targets for the model. `OneFormerProcessor` wraps `OneFormerImageProcessor` and `CLIPTokenizer` into a single instance that both prepares the images and encodes the task inputs.

- To get the final segmentation, call `post_process_semantic_segmentation`, `post_process_instance_segmentation`, or `post_process_panoptic_segmentation`, depending on the task. All three tasks can be solved using the output of `OneFormerForUniversalSegmentation`. Panoptic segmentation also accepts an optional `label_ids_to_fuse` argument to fuse instances of the target object(s) (e.g. sky) together.
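The tips above can be combined into a short inference sketch. It assumes `torch`, `transformers`, and Pillow are installed and that the `shi-labs/oneformer_ade20k_swin_tiny` checkpoint can be downloaded from the Hub. The helper name `run_oneformer` and the `POST_PROCESS_METHODS` table are illustrative and not part of the library.

```python
# Maps each task token to the matching post-processing method on the processor.
POST_PROCESS_METHODS = {
    "semantic": "post_process_semantic_segmentation",
    "instance": "post_process_instance_segmentation",
    "panoptic": "post_process_panoptic_segmentation",
}


def run_oneformer(image, task, checkpoint="shi-labs/oneformer_ade20k_swin_tiny",
                  label_ids_to_fuse=None):
    """Segment one PIL image for the given task (hypothetical helper)."""
    if task not in POST_PROCESS_METHODS:
        raise ValueError(f"task must be one of {sorted(POST_PROCESS_METHODS)}")

    # Imported lazily so the task check above works without the heavy dependencies.
    import torch
    from transformers import OneFormerForUniversalSegmentation, OneFormerProcessor

    processor = OneFormerProcessor.from_pretrained(checkpoint)
    model = OneFormerForUniversalSegmentation.from_pretrained(checkpoint)

    # The processor prepares the image and encodes the task input together.
    inputs = processor(images=image, task_inputs=[task], return_tensors="pt")
    with torch.no_grad():
        outputs = model(**inputs)

    # Resize predictions back to the original image size, given as (height, width).
    kwargs = {"target_sizes": [(image.height, image.width)]}
    if task == "panoptic" and label_ids_to_fuse is not None:
        kwargs["label_ids_to_fuse"] = set(label_ids_to_fuse)

    post_process = getattr(processor, POST_PROCESS_METHODS[task])
    return post_process(outputs, **kwargs)[0]
```

With a PIL image loaded through `Image.open(...)`, `run_oneformer(image, "semantic")` returns a tensor of per-pixel class ids, while the `"instance"` and `"panoptic"` tasks return a dictionary with `"segmentation"` and `"segments_info"` entries. The same checkpoint serves all three tasks; only the task input changes.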

Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with OneFormer.

- Demo notebooks regarding inference + fine-tuning on custom data can be found here.

If you’re interested in submitting a resource to be included here, please feel free to open a Pull Request and we will review it. The resource should ideally demonstrate something new instead of duplicating an existing resource.

OneFormer specific outputs

[[autodoc]] models.oneformer.modeling_oneformer.OneFormerModelOutput

[[autodoc]] models.oneformer.modeling_oneformer.OneFormerForUniversalSegmentationOutput

OneFormerConfig

[[autodoc]] OneFormerConfig

OneFormerImageProcessor

[[autodoc]] OneFormerImageProcessor
    - preprocess
    - post_process_semantic_segmentation
    - post_process_instance_segmentation
    - post_process_panoptic_segmentation
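The effect of the optional `label_ids_to_fuse` argument of `post_process_panoptic_segmentation` can be illustrated with a simplified, pure-Python sketch. It is not the library's implementation: the helper `assign_segment_ids` and the label ids below are hypothetical, and real post-processing also filters and thresholds masks. The sketch only shows the idea that predicted masks whose label is in the fuse set share one segment id instead of each getting its own.

```python
def assign_segment_ids(predicted_labels, label_ids_to_fuse=frozenset()):
    """Give each predicted mask a segment id; masks of fused labels share one id."""
    fused_segment = {}  # label id -> segment id already assigned to that label
    segment_ids = []
    next_id = 0
    for label in predicted_labels:
        if label in fused_segment:
            # A mask of a fused label was seen before: reuse its segment id.
            segment_ids.append(fused_segment[label])
            continue
        next_id += 1
        segment_ids.append(next_id)
        if label in label_ids_to_fuse:
            fused_segment[label] = next_id
    return segment_ids


# Hypothetical label ids for illustration: 2 = sky, 7 = person.
labels = [2, 7, 2, 7]
print(assign_segment_ids(labels))       # [1, 2, 3, 4]
print(assign_segment_ids(labels, {2}))  # [1, 2, 1, 3]
```

In the second call both sky masks end up in segment 1, while the two person masks remain separate instances.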

OneFormerImageProcessorFast

[[autodoc]] OneFormerImageProcessorFast
    - preprocess
    - post_process_semantic_segmentation
    - post_process_instance_segmentation
    - post_process_panoptic_segmentation

OneFormerProcessor

[[autodoc]] OneFormerProcessor

OneFormerModel

[[autodoc]] OneFormerModel
    - forward

OneFormerForUniversalSegmentation

[[autodoc]] OneFormerForUniversalSegmentation
    - forward